Lesson 4

Pre-lecture materials

Sentence Structure (article): https://www.nltk.org/book/ch08.html

Generative Grammar (article): https://www.thoughtco.com/what-is-generative-grammar-1690894

Linguistic Data and Unlimited Possibilities

Let’s make the thought experiment that we have a gigantic corpus consisting of everything that has been either uttered or written in English over, say, the last 50 years. Would we be justified in calling this corpus “the language of modern English”? There are a number of reasons why we might answer No. Speakers of English can make judgements about the word sequences in such a corpus, and will reject some of them as being ungrammatical. Equally, it is easy to compose a new sentence and have speakers agree that it is perfectly good English. Accordingly, we can argue that “modern English” is not equivalent to the very big set of word sequences in our imaginary corpus.

Part of what makes new sentences so easy to compose is that sentences have the interesting property that they can be embedded inside larger sentences. Consider the following sentences:

a. Usain Bolt broke the 100m record

b. The Jamaica Observer reported that Usain Bolt broke the 100m record

c. Andre said The Jamaica Observer reported that Usain Bolt broke the 100m record

d. I think Andre said the Jamaica Observer reported that Usain Bolt broke the 100m record

If we replaced whole sentences with the symbol S, we would see patterns like Andre said S and I think S. These are templates for taking a sentence and constructing a bigger sentence. There are other templates we can use, like S but S, and S when S. With a bit of ingenuity we can construct some really long sentences using these templates. Here’s an impressive example from a Winnie the Pooh story by A.A. Milne, In which Piglet is Entirely Surrounded by Water:

[You can imagine Piglet’s joy when at last the ship came in sight of him.] In after-years he liked to think that he had been in Very Great Danger during the Terrible Flood, but the only danger he had really been in was the last half-hour of his imprisonment, when Owl, who had just flown up, sat on a branch of his tree to comfort him, and told him a very long story about an aunt who had once laid a seagull’s egg by mistake, and the story went on and on, rather like this sentence, until Piglet who was listening out of his window without much hope, went to sleep quietly and naturally, slipping slowly out of the window towards the water until he was only hanging on by his toes, at which moment, luckily, a sudden loud squawk from Owl, which was really part of the story, being what his aunt said, woke the Piglet up and just gave him time to jerk himself back into safety and say, “How interesting, and did she?” when — well, you can imagine his joy when at last he saw the good ship, Brain of Pooh (Captain, C. Robin; 1st Mate, P. Bear) coming over the sea to rescue him…

This long sentence actually has a simple structure that begins S but S when S. We can see from this example that language provides us with constructions which seem to allow us to extend sentences indefinitely.
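The templates can be thought of as functions that take a sentence and return a bigger one. In this minimal sketch, a template is a Python format string whose `{}` slot stands for S; the names `EMBEDDING_TEMPLATES`, `embed` and `conjoin` are hypothetical, chosen for illustration. Applying the one-slot templates in turn rebuilds the embedded Usain Bolt examples, and `conjoin` fills two-slot templates such as S but S.

```python
# Hypothetical templates: each "{}" slot stands for a whole sentence S.
EMBEDDING_TEMPLATES = [
    "The Jamaica Observer reported that {}",
    "Andre said {}",
    "I think {}",
]


def embed(sentence, templates):
    """Wrap the sentence in each one-slot template in turn, keeping every step."""
    steps = [sentence]
    for template in templates:
        sentence = template.format(sentence)
        steps.append(sentence)
    return steps


def conjoin(left, right, template="{} but {}"):
    """Fill a two-slot template such as S but S or S when S."""
    return template.format(left, right)


for s in embed("Usain Bolt broke the 100m record", EMBEDDING_TEMPLATES):
    print(s)
```

Because every output of `embed` or `conjoin` is again a sentence that can be fed back in, nothing in the templates themselves puts an upper bound on sentence length.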
It is also striking that we can understand sentences of arbitrary length that we’ve never heard before: it’s not hard to concoct an entirely novel sentence, one that has probably never been used before in the history of the language, yet all speakers of the language will understand it.

The purpose of a grammar is to give an explicit description of a language. But the way in which we think of a grammar is closely intertwined with what we consider to be a language. Is it a large but finite set of observed utterances and written texts? Is it something more abstract like the implicit knowledge that competent speakers have about grammatical sentences? Or is it some combination of the two? We won’t take a stand on this issue, but instead will introduce the main approaches.

In this lesson, we will adopt the formal framework of “generative grammar”, in which a “language” is considered to be nothing more than an enormous collection of all grammatical sentences, and a grammar is a formal notation that can be used for “generating” the members of this set. Grammars use recursive productions of the form S → S and S, as we will explore in the Context Free Grammar section. The same approach can be extended to build up the meaning of a sentence automatically out of the meanings of its parts.

What’s the Use of Syntax?

Beyond n-grams

Here’s another pair of examples:

a. The worst part and clumsy looking for whoever heard light

b. He roared with me the pail slip down his back

You intuitively know that these sequences are “word-salad”, but you probably find it hard to pin down what’s wrong with them. One benefit of studying grammar is that it provides a conceptual framework and vocabulary for spelling out these intuitions. Let’s take a closer look at the sequence the worst part and clumsy looking. This looks like a coordinate structure, where two phrases are joined by a coordinating conjunction such as and, but, or or. Here’s an informal (and simplified) statement of how coordination works syntactically:

Coordinate Structure: If v1 and v2 are both phrases of grammatical category X, then v1 and v2 is also a phrase of category X.

Here are a couple of examples. In the first, two NPs (noun phrases) have been conjoined to make an NP, while in the second, two APs (adjective phrases) have been conjoined to make an AP.

a. The book’s ending was (NP the worst part and the best part) for me.

b. On land they are (AP slow and clumsy looking).

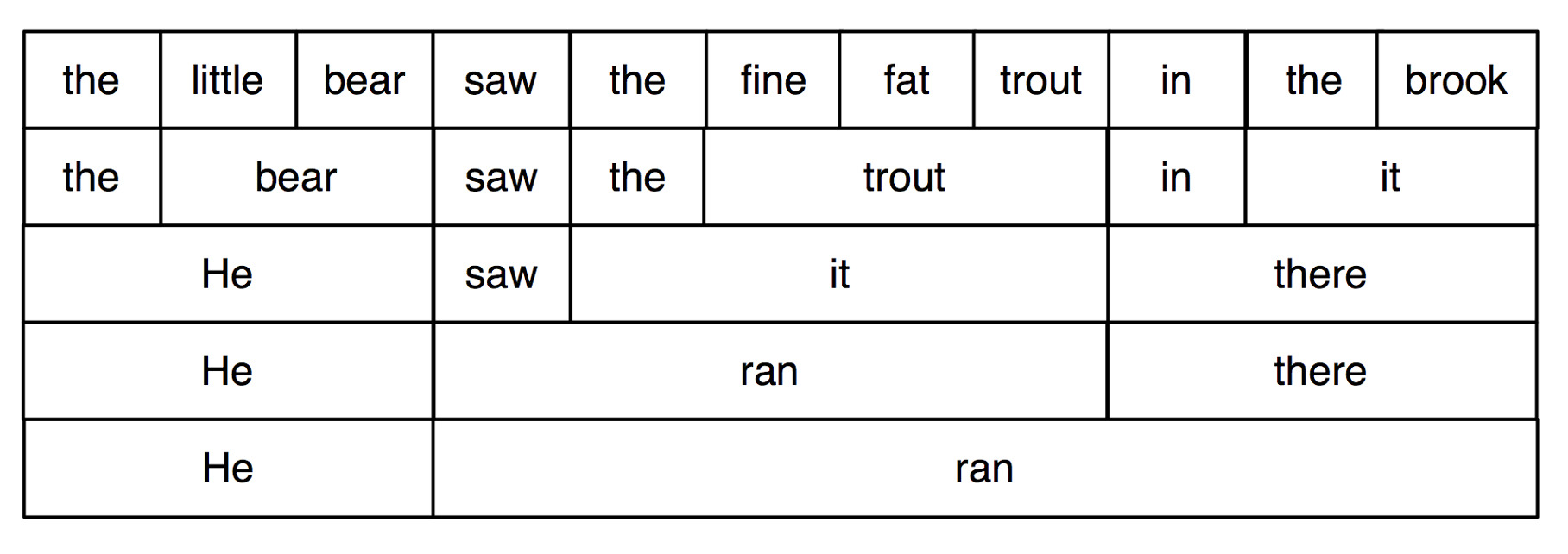
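The Coordinate Structure rule amounts to a check on categories. This minimal sketch assumes a hypothetical, hand-labelled table mapping each phrase from the examples to its category (a real system would get the categories from a parser); `coordinate` returns the conjoined phrase with its category X, or `None` when the two categories differ.

```python
# Hypothetical hand-labelled categories for the phrases in the examples above.
PHRASE_CATEGORY = {
    "the worst part": "NP",
    "the best part": "NP",
    "slow": "AP",
    "clumsy looking": "AP",
}


def coordinate(v1, v2, conjunction="and"):
    """Coordinate Structure: if v1 and v2 are both of category X,
    then 'v1 conjunction v2' is also of category X; otherwise return None."""
    x1 = PHRASE_CATEGORY[v1]
    x2 = PHRASE_CATEGORY[v2]
    if x1 != x2:
        return None
    return f"{v1} {conjunction} {v2}", x1


print(coordinate("the worst part", "the best part"))  # ('the worst part and the best part', 'NP')
print(coordinate("slow", "clumsy looking"))           # ('slow and clumsy looking', 'AP')
print(coordinate("the worst part", "clumsy looking"))  # None
```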

What we can’t do is conjoin an NP and an AP, which is why the worst part and clumsy looking is ungrammatical.

Before we can formalize these ideas, we need to understand the concept of constituent structure. Constituent structure is based on the observation that words combine with other words to form units. The evidence that a sequence of words forms such a unit is given by substitutability: a sequence of words in a well-formed sentence can be replaced by a shorter sequence without rendering the sentence ill-formed. To clarify this idea, consider the following sentence:

The little bear saw the fine fat trout in the brook.

The fact that we can substitute He for The little bear indicates that the latter sequence is a unit. By contrast, we cannot replace little bear saw in the same way (the asterisk marks an ungrammatical sentence):

a. He saw the fine fat trout in the brook.

b. *The he the fine fat trout in the brook.

In the figures below, we systematically replace longer sequences with shorter ones in a way that preserves grammaticality. Each sequence that forms a unit can in fact be replaced by a single word, and we end up with just two elements.

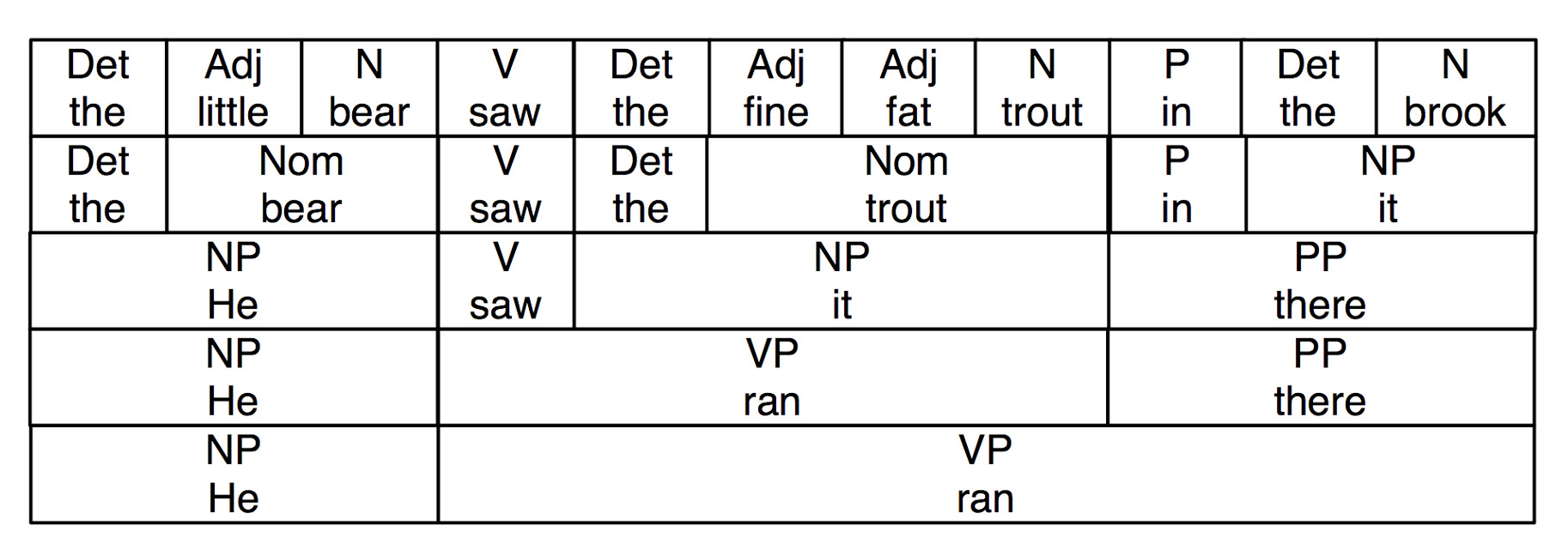

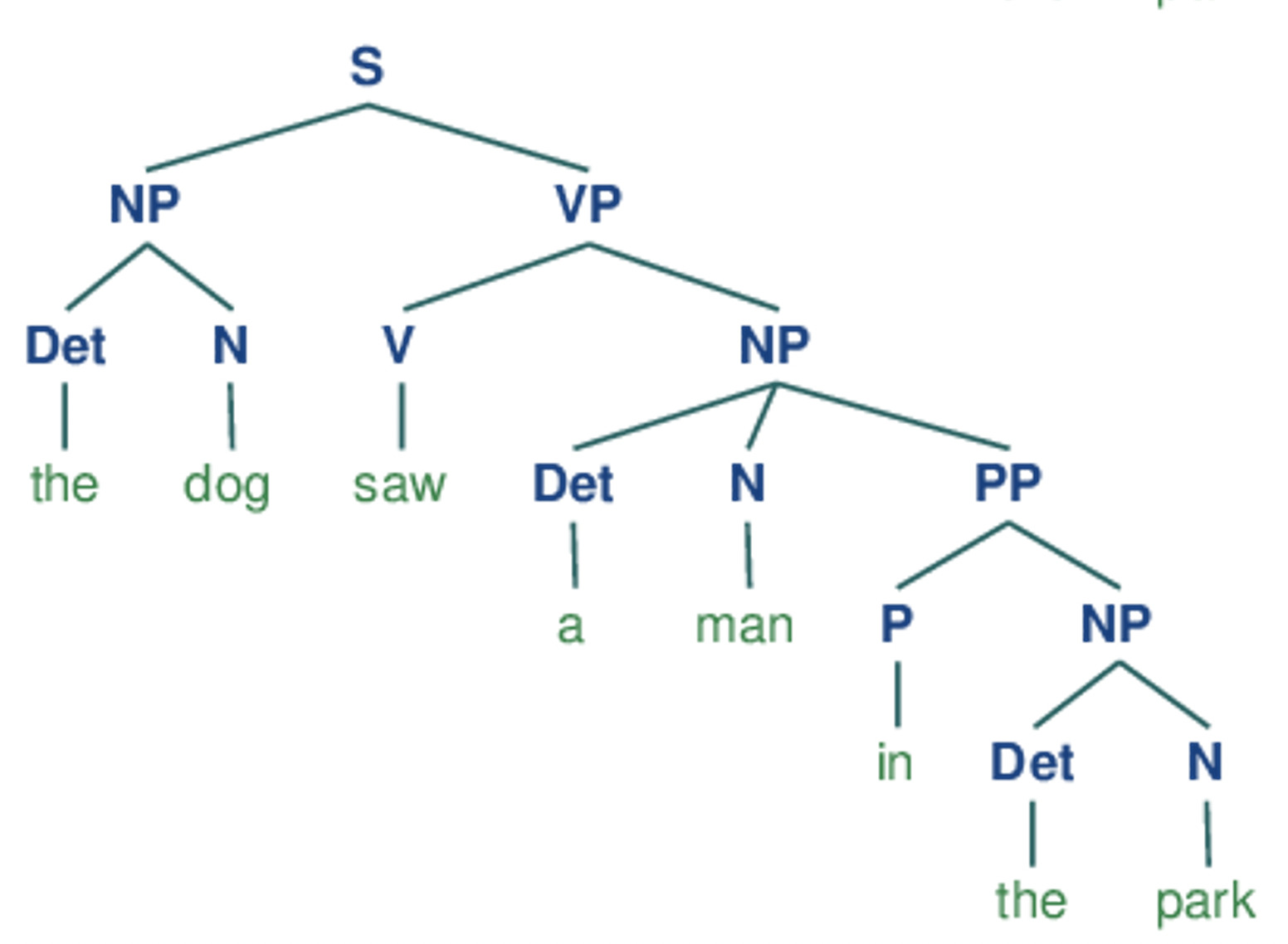

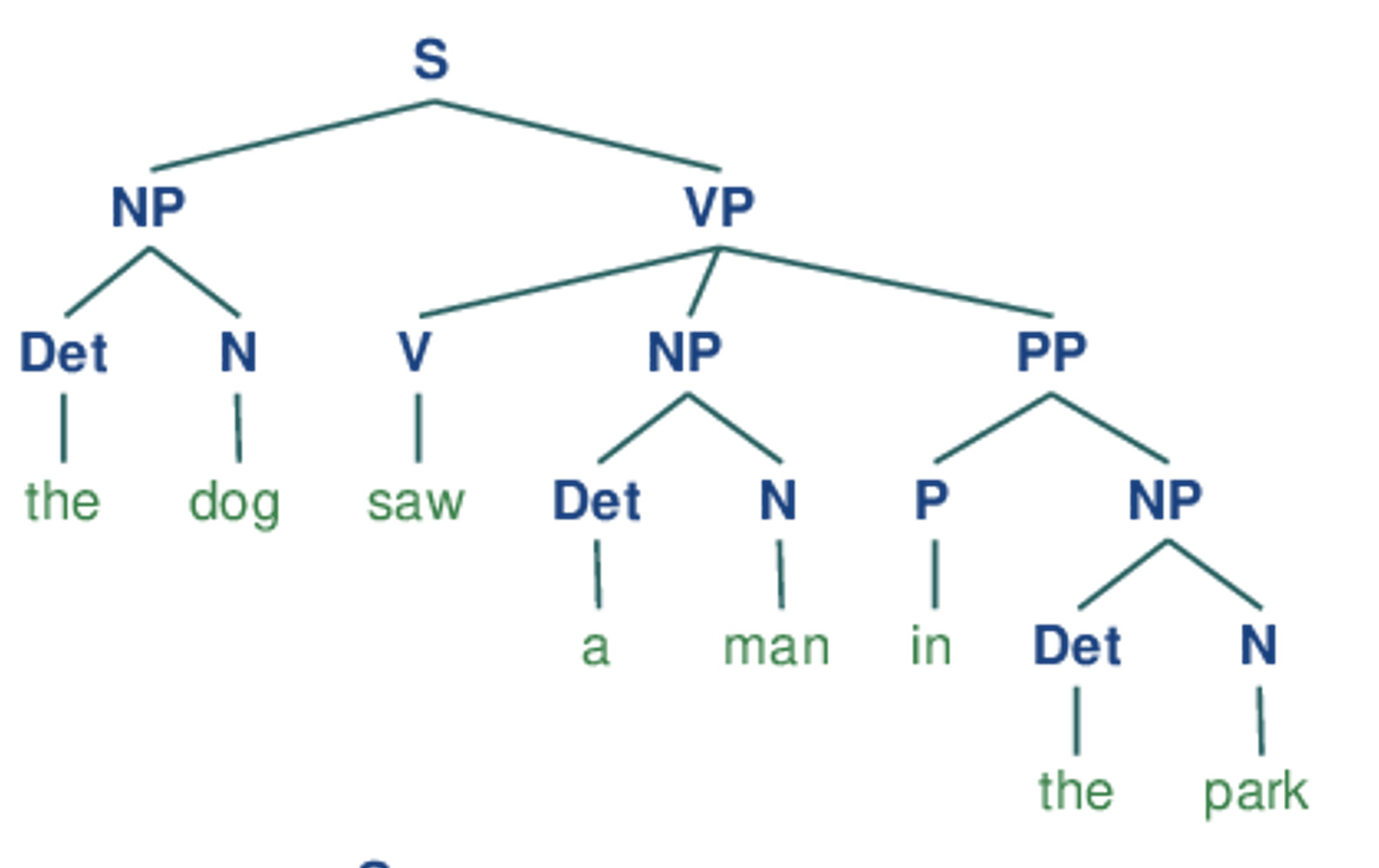
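The substitution process can be replayed as a sequence of string replacements. This is a minimal sketch: the particular steps in `STEPS` are an illustrative assumption and need not match the figures exactly, and the code only records each step, since judging whether the result is still grammatical is left to a human speaker.

```python
# Hypothetical substitution steps: each unit is replaced by a single word.
SENTENCE = "The little bear saw the fine fat trout in the brook"
STEPS = [
    ("The little bear", "He"),
    ("the fine fat trout", "it"),
    ("in the brook", "there"),
    ("saw it there", "ran"),
]


def substitute(sentence, steps):
    """Apply each (unit, replacement) pair in order and return every intermediate sentence."""
    history = [sentence]
    for unit, word in steps:
        if unit not in sentence:
            raise ValueError(f"{unit!r} not found in {sentence!r}")
        sentence = sentence.replace(unit, word, 1)
        history.append(sentence)
    return history


for line in substitute(SENTENCE, STEPS):
    print(line)  # last line printed: He ran
```

The final line has just two elements, He and ran, mirroring the end point described above.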

In the figure below, we have added grammatical category labels to the words we saw in the earlier figure. The labels NP, VP, and PP stand for noun phrase, verb phrase, and prepositional phrase, respectively.

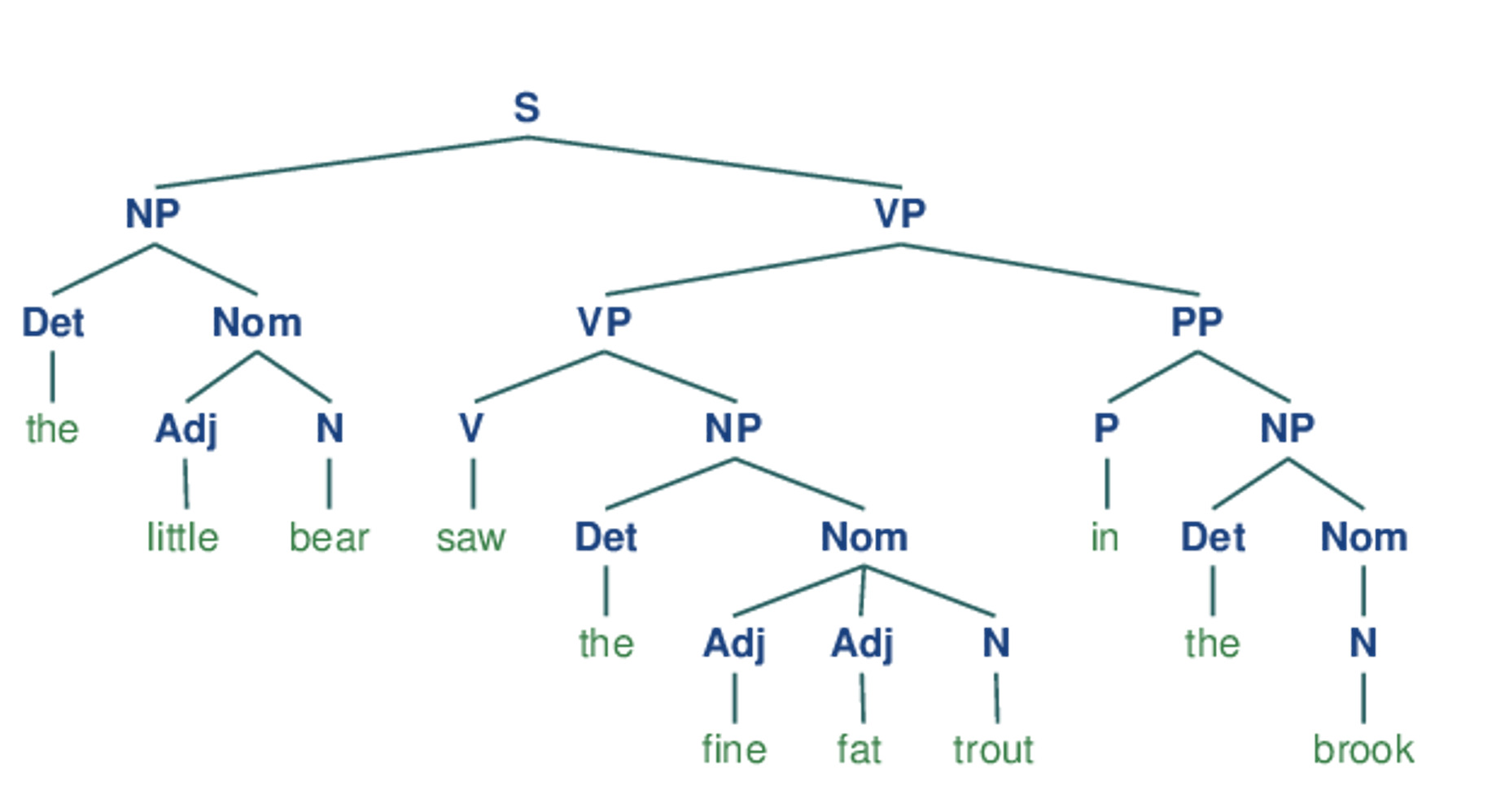

If we now strip out the words apart from the topmost row, add an S node, and flip the figure over, we end up with a standard phrase structure tree. Each node in this tree (including the words) is called a constituent. The immediate constituents of S are NP and VP.

As we will see in the next section, a grammar specifies how the sentence can be subdivided into its immediate constituents, and how these can be further subdivided until we reach the level of individual words.
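A phrase structure tree can be represented in Python as nested tuples of the form (label, child, ...), where a plain string is a word. This minimal sketch assumes one plausible bracketing of The little bear saw the fine fat trout in the brook, with the PP inside the VP and an Adj label for the adjectives (both assumptions); it lists the immediate constituents of S, recovers the words at the bottom of the tree, and counts every node as a constituent.

```python
# One plausible tree for the example sentence (labels and PP attachment are assumptions).
TREE = (
    "S",
    ("NP", ("Det", "the"), ("Adj", "little"), ("N", "bear")),
    ("VP",
     ("V", "saw"),
     ("NP", ("Det", "the"), ("Adj", "fine"), ("Adj", "fat"), ("N", "trout")),
     ("PP", ("P", "in"), ("NP", ("Det", "the"), ("N", "brook")))),
)


def label(node):
    """A word is its own label; a subtree's label is its first element."""
    return node if isinstance(node, str) else node[0]


def immediate_constituents(tree):
    """Labels of the nodes directly below the root."""
    return [label(child) for child in tree[1:]]


def leaves(tree):
    """Collect the words at the bottom of the tree, left to right."""
    words = []
    for child in tree[1:]:
        if isinstance(child, str):
            words.append(child)
        else:
            words.extend(leaves(child))
    return words


def count_constituents(tree):
    """Count every node, words included, since each one is a constituent."""
    return 1 + sum(1 if isinstance(c, str) else count_constituents(c) for c in tree[1:])


print(immediate_constituents(TREE))  # ['NP', 'VP']
print(" ".join(leaves(TREE)))        # the little bear saw the fine fat trout in the brook
```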

Context Free Grammar

Syntactic Categories

| Symbol | Meaning | Example |
|---|---|---|
| S | sentence | the man walked |
| NP | noun phrase | a dog |
| VP | verb phrase | saw a park |
| PP | prepositional phrase | with a telescope |
| Det | determiner | the |
| N | noun | dog |
| V | verb | walked |
| P | preposition | in |

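If we parse the sentence The dog saw a man in the park using a grammar built from these categories, we end up with two trees, written here in bracket notation:

a. (S (NP (Det the) (N dog)) (VP (V saw) (NP (Det a) (N man)) (PP (P in) (NP (Det the) (N park)))))

b. (S (NP (Det the) (N dog)) (VP (V saw) (NP (Det a) (N man) (PP (P in) (NP (Det the) (N park))))))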

Since our grammar licenses two trees for this sentence, the sentence is said to be structurally ambiguous. The ambiguity in question is called a prepositional phrase attachment ambiguity, because the PP in the park needs to be attached to one of two places in the tree: either as a child of VP or else as a child of NP. When the PP is attached to VP, the intended interpretation is that the seeing event happened in the park. However, if the PP is attached to NP, then it was the man who was in the park, and the agent of the seeing (the dog) might have been sitting on the balcony of an apartment overlooking the park.
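To see where the two trees come from, here is a minimal sketch of a recursive-descent parser in plain Python that enumerates every parse. The rules in `GRAMMAR` and the words in `LEXICON` are assumptions chosen to cover this sentence with the categories from the table, not necessarily the grammar the original material used; NLTK offers ready-made equivalents such as `nltk.CFG.fromstring` and `nltk.ChartParser`. This simple approach only works because none of the assumed rules is left-recursive.

```python
# Hypothetical grammar: each phrase category maps to its possible right-hand sides.
GRAMMAR = {
    "S": [["NP", "VP"]],
    "VP": [["V", "NP"], ["V", "NP", "PP"]],
    "PP": [["P", "NP"]],
    "NP": [["Det", "N"], ["Det", "N", "PP"]],
}

# Hypothetical lexicon: each word-level category maps to the words it covers.
LEXICON = {
    "Det": {"a", "the"},
    "N": {"dog", "man", "park", "telescope"},
    "V": {"saw", "walked"},
    "P": {"in", "with"},
}


def parse(symbol, tokens, start):
    """Yield (tree, end) for every way `symbol` can cover tokens[start:end]."""
    if symbol in LEXICON:
        if start < len(tokens) and tokens[start] in LEXICON[symbol]:
            yield (symbol, tokens[start]), start + 1
        return
    for rhs in GRAMMAR[symbol]:
        yield from parse_sequence(symbol, rhs, tokens, start, [])


def parse_sequence(label, rhs, tokens, start, children):
    """Match the symbols in `rhs` left to right, collecting one subtree per symbol."""
    if not rhs:
        yield (label, *children), start
        return
    for child, end in parse(rhs[0], tokens, start):
        yield from parse_sequence(label, rhs[1:], tokens, end, children + [child])


def parse_all(sentence):
    """Return every tree rooted in S that spans the whole sentence."""
    tokens = sentence.lower().split()
    return [tree for tree, end in parse("S", tokens, 0) if end == len(tokens)]


def brackets(tree):
    """Render a (label, child, ...) tuple in bracket notation."""
    label, *children = tree
    parts = [c if isinstance(c, str) else brackets(c) for c in children]
    return f"({label} {' '.join(parts)})"


for tree in parse_all("The dog saw a man in the park"):
    print(brackets(tree))
```

One printed tree has the PP inside the NP for a man, the other has it directly under the VP, matching the two readings described above.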

To Do

- Make a function based on the tree structure to construct sentences

- Predefine the different elements in attribute arrays

- Randomly select one element from each array, pass it into the function to produce a sentence, and assign this sentence to the sentence label in the GUI
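The To Do steps might look roughly like the following. This is a hypothetical sketch: the word arrays, the names `build_sentence` and `random_sentence`, and the fixed S → NP VP, VP → V NP PP shape are all assumptions, and the GUI step is left out because it depends on the toolkit you use.

```python
import random

# Hypothetical "attribute arrays": one list of words per category from the table.
DETERMINERS = ["the", "a"]
NOUNS = ["dog", "man", "park", "telescope"]
VERBS = ["saw", "walked"]
PREPOSITIONS = ["in", "with"]


def build_sentence(det1, noun1, verb, det2, noun2, prep, det3, noun3):
    """Assemble words in the shape S -> NP VP, VP -> V NP PP, PP -> P NP, NP -> Det N."""
    subject = f"{det1} {noun1}"        # NP
    obj = f"{det2} {noun2}"            # NP inside the VP
    pp = f"{prep} {det3} {noun3}"      # PP inside the VP
    sentence = f"{subject} {verb} {obj} {pp}"
    return sentence[0].upper() + sentence[1:] + "."


def random_sentence(rng=random):
    """Pick one word from each array and pass them to build_sentence."""
    return build_sentence(
        rng.choice(DETERMINERS), rng.choice(NOUNS), rng.choice(VERBS),
        rng.choice(DETERMINERS), rng.choice(NOUNS),
        rng.choice(PREPOSITIONS), rng.choice(DETERMINERS), rng.choice(NOUNS),
    )


print(random_sentence())
```

With tkinter, for instance, the last step could be a call such as `sentence_label.config(text=random_sentence())`, where `sentence_label` is a hypothetical `Label` widget in your window. Passing a seeded `random.Random(...)` as `rng` makes the output repeatable for testing.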

If you are having any issues with this process, feel free to email me any time for assistance: alynch@ucsbieee.org